
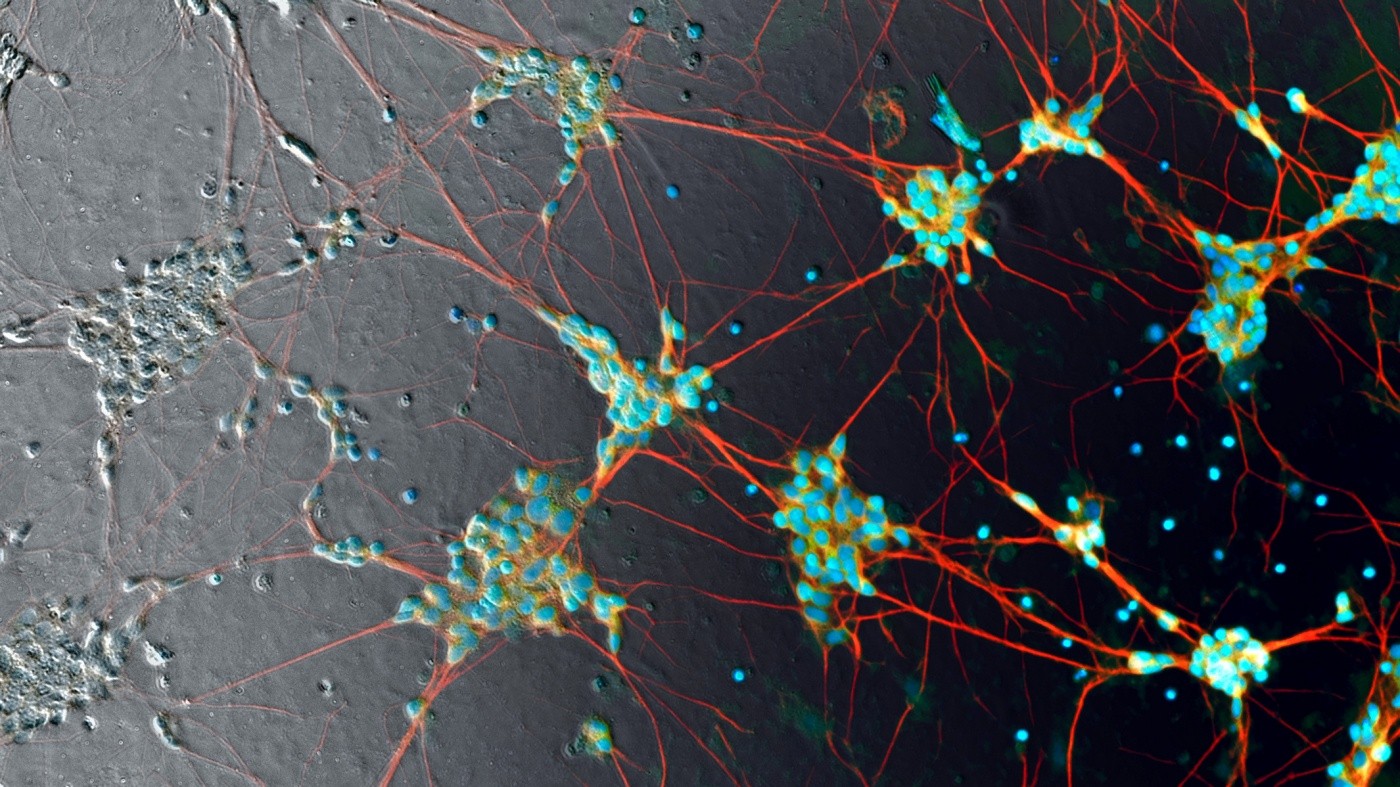

Steve Finkbeiner and his team collaborated with Google AI to uncover the tip of an iceberg that could transform biomedical science. In this image, human induced pluripotent stem cell neurons are imaged in phase contrast (gray pixels) and superimposed with predicted fluorescent labels (color pixels). Photo: Google

It’s harder than you might think to look at a microscope image of an untreated cell and identify its features. To make cell characteristics visible to the human eye, scientists normally have to use chemicals that can kill the very cells they want to look at.

A groundbreaking study shows that computers can see details in images without using these invasive techniques. They can examine cells that haven’t been treated and find a wealth of data that scientists can’t detect on their own. In fact, images contain much more information than was ever thought possible.

Steve Finkbeiner, MD, PhD, a director and senior investigator at the Gladstone Institutes, teamed up with computer scientists at Google. Using artificial intelligence approaches, they discovered that by training a computer, they could give scientists a way to surpass regular human performance.

The method they used is known as deep learning, a type of machine learning that involves algorithms that can analyze data, recognize patterns, and make predictions. Their work, published in the renowned scientific journal Cell, is one of the first applications of deep learning in biology.

And it’s just the tip of the iceberg.

“This is going to be transformative,” said Finkbeiner, who is the director of the Center for Systems and Therapeutics at Gladstone in San Francisco. “Deep learning is going to fundamentally change the way we conduct biomedical science in the future, not only by accelerating discovery, but also by helping find treatments to address major unmet medical needs.”

Biology Meets Artificial Intelligence

Nearly 10 years ago, Finkbeiner and his team at Gladstone invented a fully automated robotic microscope that can track individual cells for hours, days, or even months. Because it generates 3–5 terabytes of data per day, they also developed powerful statistical and computational methods to analyze the overwhelming amount of information.

Given the sheer size and complexity of the data collected, Finkbeiner started to explore deep learning as a way to enhance his research by providing insights that humans could not otherwise uncover. Then, he was approached by Google. The company has been a leader in this branch of artificial intelligence, which relies on artificial neural networks that loosely mimic the human brain’s capacity to process information through many layers of interconnected neurons.

“We wanted to use our passion for machine learning to solve big problems,” said Philip Nelson, director of engineering at Google Accelerated Science. “A collaboration with Gladstone was an excellent opportunity for us to apply our expanding knowledge of artificial intelligence to help scientists in other fields in a way that could benefit society in a tangible way.”

It was a perfect fit. Finkbeiner needed advanced computer science knowledge. Google needed a biomedical research project that generated sufficient amounts of data to be amenable to deep learning.

Finkbeiner had initially tried using off-the-shelf software solutions with limited success. This time, Google helped his team customize a model with TensorFlow, a popular open-source library for deep learning originally developed by Google AI engineers.

A Network Trained to Reach Superhuman Performance

Although much of their work relies on microscopy images, scientists have long struggled to detect elements within a cell because biological samples are mostly made up of water. Over time, they developed methods that add fluorescent labels to cells in order to see features the human eye normally can’t see. But these techniques have significant drawbacks, from being time-consuming to killing the cells they’re trying to study.

Finkbeiner and Eric Christiansen, the study’s first author, discovered that these extra steps are not necessary. As it turns out, images contain much more information than meets the eye—literally.

They invented a new deep learning approach called “in silico labeling,” in which a computer can find and predict features in images of unlabeled cells. This new method uncovers important information that would otherwise be problematic or impossible for scientists to obtain.

“We trained the neural network by showing it two sets of matching images of the same cells; one unlabeled and one with fluorescent labels,” explained Christiansen, software engineer at Google Accelerated Science. “We repeated this process millions of times. Then, when we presented the network with an unlabeled image it had never seen, it could accurately predict where the fluorescent labels belong.”
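The paired-image idea Christiansen describes can be illustrated with a toy sketch. This is not the study's model: it swaps the deep neural network for a plain linear least-squares fit, and it uses small synthetic arrays in place of real microscope images. The function names (`extract_patches`, `synthetic_pair`, `train`, `predict`) and the rule that generates the toy "fluorescent label" are assumptions made up for illustration only.

```python
import numpy as np

def extract_patches(image, size=3):
    """Return one flattened size x size neighborhood per interior pixel."""
    h, w = image.shape
    r = size // 2
    rows = []
    for i in range(r, h - r):
        for j in range(r, w - r):
            rows.append(image[i - r:i + r + 1, j - r:j + r + 1].ravel())
    return np.array(rows)

def synthetic_pair(rng, shape=(16, 16)):
    """Hypothetical stand-in for one (unlabeled, fluorescent) image pair."""
    unlabeled = rng.random(shape)
    # Toy "label" (an assumption): each interior pixel's label value is the
    # mean of its 3x3 neighborhood in the unlabeled image.
    label = extract_patches(unlabeled).mean(axis=1)
    return unlabeled, label

def add_bias(features):
    """Append a constant column so the fit can learn an offset."""
    return np.hstack([features, np.ones((features.shape[0], 1))])

def train(pairs):
    """Fit weights mapping unlabeled-image patches to label pixels."""
    X = add_bias(np.vstack([extract_patches(u) for u, _ in pairs]))
    y = np.concatenate([label for _, label in pairs])
    weights, *_ = np.linalg.lstsq(X, y, rcond=None)
    return weights

def predict(weights, unlabeled):
    """Predict label pixels for an unlabeled image the model has not seen."""
    return add_bias(extract_patches(unlabeled)) @ weights

rng = np.random.default_rng(0)
training_pairs = [synthetic_pair(rng) for _ in range(20)]
weights = train(training_pairs)

# Present an unlabeled image that was not part of training.
unseen, true_label = synthetic_pair(rng)
predicted = predict(weights, unseen)
max_error = float(np.max(np.abs(predicted - true_label)))
print(f"max error on unseen image: {max_error:.2e}")
```

The shape of the workflow is the point here: matched pairs go in during training, and only the unlabeled image is needed at prediction time. A real system would replace the linear fit with a deep network and the synthetic arrays with actual paired micrographs.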

The deep network can identify whether a cell is alive or dead, and get the answer right 98 percent of the time. It was even able to pick out a single dead cell in a mass of live cells.

In comparison, people can typically only identify a dead cell with 80 percent accuracy. In fact, when experienced biologists—who look at cells every day—are presented with the image of the same cell twice, they will sometimes give different answers.

Finkbeiner and Nelson realized that once trained, the network can continue to improve its performance and increase the ability and speed with which it learns to perform new tasks. So, they trained it to accurately predict the location of the cell’s nucleus, or command center.

The model can also distinguish between different cell types. For instance, the network can identify a neuron within a mix of cells in a dish. It can go one step further and predict whether an extension of that neuron is an axon or dendrite, two different but similar-looking elements of the cell.

“The more the model has learned, the less data it needs to learn a new similar task,” said Nelson. “This kind of transfer learning—where a network applies what it’s learned on some types of images to entirely new types—has been a long-standing challenge in AI, and we’re excited to have gotten it working so well here. By applying previous lessons to new tasks, our network can continue to improve and make accurate predictions on even more data than we measured in this study.”

“This approach has the potential to revolutionize biomedical research,” said Margaret Sutherland, PhD, program director at the National Institute of Neurological Disorders and Stroke, which partly funded the study. “Researchers are now generating extraordinary amounts of data. For neuroscientists, this means that training machines to help analyze this information can help speed up our understanding of how the cells of the brain are put together and in applications related to drug development.”

Deep Learning Can Transform Biomedical Science

Certain applications of deep learning have become almost commonplace, from smartphones to self-driving cars. But for biologists who are not familiar with the techniques, the use of artificial intelligence as a tool in the lab can be difficult to fathom.

“Bringing this technology to biologists is such an important goal,” said Finkbeiner, who is also the director of the Taube/Koret Center for Neurodegenerative Disease Research at Gladstone and a professor of neurology and physiology at UC San Francisco. “When giving talks, I noticed that as soon as my colleagues understand what we’re trying to do at a conceptual level, they almost stop listening! Once they can start to imagine how deep learning can help them tackle unanswerable problems, that’s when it becomes really exciting.”

The potential biological applications of deep learning are endless. In his laboratory, Finkbeiner is trying to find new ways to diagnose and treat neurodegenerative disorders, such as Alzheimer’s disease, Parkinson’s disease, and amyotrophic lateral sclerosis (ALS).

“We still don’t understand the exact cause of the disease for 90 percent of these patients,” said Finkbeiner. “What’s more, we don’t even know if all patients have the same cause, or if we could classify the diseases into different types. Deep learning tools could help us find answers to these questions, which have huge implications on everything from how we study the disease to the way we conduct clinical trials.”

Without knowing the classifications of a disease, a drug could be tested on the wrong group of patients and seem ineffective, when it could actually work for different patients. With induced pluripotent stem cell technology, scientists could match patients’ own cells with their clinical information, and the deep network could find relationships between the two datasets to predict connections. This could help identify a subgroup of patients with similar cell features and match them to the appropriate therapy.

“With the development of so many advanced technologies in science, I think we underestimated the power of images, and our study reaffirms the relevance of microscopy,” said Finkbeiner. “The funny thing is, some of the images we used to train the deep network rely on methods that date back to my days as a graduate student. I thought we had mined every piece of useful data in those images and stopped using them years ago. I found out there’s shockingly more information in images than humans can grasp.”

With the help of artificial intelligence, the number of features that can be obtained from images is nearly infinite. The limits of human imagination may be the only remaining factor.

To learn more, read the news release published by the National Institutes of Health or the Google Research Blog post.

About the Study

Other contributors to this study include Ashkan Javaherian, Gaia Skibinski, Elliot Mount, Kevan Shah, Alicia K. Lee, and Piyush Goyal from Gladstone; Samuel J. Yang, D. Michael Ando, William Fedus, Ryan Poplin, Andre Esteva, and Marc Bendi from Google; and Scott Lipnick, Alison O’Neil, and Lee L. Rubin from Harvard University.

The research was funded by Google, the National Institute of Neurological Disorders and Stroke of the National Institutes of Health, the Taube/Koret Center for Neurodegenerative Disease Research at Gladstone, the ALS Association’s Neuro Collaborative, and The Michael J. Fox Foundation for Parkinson's Research.

For Media

Julie Langelier

Associate Director, Communications

415.734.5000


About Gladstone Institutes

Gladstone Institutes is an independent, nonprofit life science research organization that uses visionary science and technology to overcome disease. Established in 1979, it is located in the epicenter of biomedical and technological innovation, in the Mission Bay neighborhood of San Francisco. Gladstone has created a research model that disrupts how science is done, funds big ideas, and attracts the brightest minds.
